Everything You Need to Know About 4K HDR TVs

High dynamic range, or HDR, can make your TV's picture more lifelike—but not all sets do it well


If you’re in the market for a new TV, there’s a good chance it will be a 4K set, or even an 8K model, with a feature called high dynamic range, or HDR for short.

You should be particularly excited about HDR, which is now found in the vast majority of midsized to large sets. TVs that do a good job with HDR video can present brighter, more vivid images with greater contrast and a wider array of colors, much closer to what we see in real life. (By comparison, 4K and 8K displays provide greater picture detail, but not everyone notices the difference.)

CR’s testers have found that not all TVs with HDR perform equally well. In fact, it has become one of the key differentiators among the sets in our TV ratings.

What Is HDR?

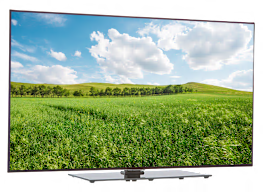

In music, dynamic range refers to the difference between the softest and loudest parts of a composition. In video, high dynamic range is about increasing the contrast between the brightest whites and the darkest blacks a TV can produce.

“When done well, HDR presents more natural illumination of image content,” says Matt Ferretti, who heads the Consumer Reports TV testing program. “Though HDR demands a higher peak brightness from the TV, it doesn’t mean it has to present a blindingly bright image to the viewer. It simply means the TV has the brightness headroom needed to present the various elements in an image (a shadowy cave, sunlit facial highlights, a brightly lit lightbulb) at the brightness level that is required.”

When HDR is at work, you’ll notice the texture of the brick on a shady walkway or subtle shading in the white clouds in a daytime sky. With an expanded dynamic range from white to black, you’re able to see more nuance, in both bright and dark areas of an image.


Not All HDR TVs Deliver on the Promise

Our tests show that not every TV with “HDR” written on the box produces equally rich, lifelike images.

First of all, TVs are all over the map when it comes to picture quality, HDR or no HDR. But there are also challenges specific to this technology.

Most notably, a TV must have a bright screen to really deliver on HDR. To understand why, you need to know your “nits,” the units used to measure brightness.

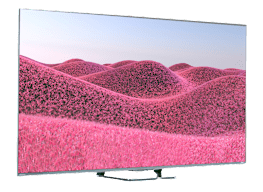

Better-performing HDR TVs typically generate at least 600 nits of peak brightness, with top performers hitting 1,000 nits or more. (There are now some high-end LCD/LED TVs that claim up to 3,000 nits of peak brightness, though most content is still mastered at 1,000 nits.) But many HDR TVs produce only 200 to 300 nits, not nearly enough to deliver an HDR experience.
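As a rough illustration, the brightness figures above can be turned into a small lookup. This is only a sketch: the function name and tier labels are made up for this example, and the 300-to-600-nit band is an assumption, because the figures above don’t say how sets in that range perform.

```python
def hdr_brightness_tier(peak_nits: float) -> str:
    """Map a measured peak brightness (in nits) to a rough HDR tier.

    Thresholds follow the figures quoted in the article; the tier
    names and the 300-600 nit band are illustrative assumptions.
    """
    if peak_nits >= 1000:
        return "top performer"
    if peak_nits >= 600:
        return "better-performing"
    if peak_nits > 300:
        return "in between (not covered by the article's figures)"
    return "too dim for a real HDR experience"


for nits in (250, 450, 700, 1200):
    print(f"{nits} nits: {hdr_brightness_tier(nits)}")
```

Keep in mind that the input should be a measured brightness, not a number taken from the box.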

With an underpowered TV, the fire of a rocket launch becomes a single massive white flare. With a brighter television, you’d see more intense, lifelike flames, as if you were really there.

“The benefits of HDR are often lost with mediocre displays,” Ferretti says.

How to Tell a Great HDR TV From a Bad One

Unfortunately, you can’t just read the packaging—or even rely on how the picture looks in the store.

You can’t rely on a TV’s claim of peak brightness, either. Most of those measurements are recorded using a standard industry test pattern, called a 10 percent window, that evaluates the brightness of a small box against a completely black background. But companies can use other methods that produce inflated peak brightness numbers.

What to do? If you’re a CR member, check our TV ratings and buying guide. We have separate scores for UHD picture quality and HDR performance.

We measure brightness differently from the testers at most other organizations. You know that 10 percent window pattern? While we do use this pattern in our tests, we don’t think it’s a realistic way to determine a TV’s brightness during a regular TV show or movie. That’s why Consumer Reports developed its own brightness test patterns, placing that white 10 percent window against a background of moving video. That gives us a much better idea of the set’s real brightness when actually playing content.
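For a sense of scale, here is a minimal sketch of how big a 10 percent window is on a 4K screen. It assumes that “10 percent” refers to screen area and that the box keeps the screen’s shape; the function name is hypothetical, and this is not a description of any lab’s actual test pattern.

```python
import math


def window_size(screen_w: int, screen_h: int, area_fraction: float = 0.10):
    """Return (width, height) in pixels of a centered test window.

    Assumes the window covers `area_fraction` of the screen area and
    has the same aspect ratio as the screen, so each side is scaled
    by the square root of the area fraction.
    """
    scale = math.sqrt(area_fraction)
    return round(screen_w * scale), round(screen_h * scale)


w, h = window_size(3840, 2160)  # a 4K (UHD) panel
share = w * h / (3840 * 2160)
print(f"window: {w} x {h} pixels, {share:.1%} of the screen")
```

Because the box covers only about a tenth of the screen, a set can hit a high number on this pattern without sustaining that brightness across a full, busy picture.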

If you look through our ratings, you’ll see that the TVs with the best HDR tend to be the priciest. But there are also some good choices for people who want to spend less. We think HDR performance will continue to be the big differentiator among 4K TVs throughout 2025, but don’t be surprised if more lower-cost sets start to deliver a satisfying HDR experience, too.

The Different Types of HDR

When you shop for a TV, you may see references to different kinds of HDR. There are several variations on the technology. It can be useful to understand these different HDR flavors, but if it’s more information than you want, don’t worry. TVs with any type of HDR can work well.

HDR10 has been adopted as an open, free technology standard, and it’s supported by all 4K TVs with HDR, all 4K UHD Blu-ray players, and all HDR programming.

Many TVs, including models from Amazon, Hisense, LG, Roku, Sharp, Sony, TCL, and Vizio, also offer Dolby Vision, an enhanced format that works a bit differently from HDR10. It’s also available on some Amazon Fire TV, Apple TV, Google TV, and Roku streaming players, and from streaming services, including Amazon Prime Video, Apple TV+, Disney+, Hulu, Netflix, and Paramount+.

Among its advantages, Dolby Vision supports “dynamic” metadata, which allows the TV to adjust brightness on a scene-by-scene or frame-by-frame basis. By contrast, HDR10 uses “static” metadata, setting brightness levels once for the entire movie or show.
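The difference between static and dynamic metadata can be sketched with a toy example. The scene names and nit values below are invented, and real metadata carries more fields than a single brightness value, so treat this as a simplification rather than the actual format.

```python
from dataclasses import dataclass


@dataclass
class Scene:
    name: str
    peak_nits: int  # brightest highlight in the scene (made-up value)


# Hypothetical movie with three very different scenes.
scenes = [
    Scene("shadowy cave", 120),
    Scene("sunlit face", 600),
    Scene("rocket launch", 1000),
]


def static_metadata(scenes):
    """One brightness value for the whole title (HDR10-style)."""
    return max(s.peak_nits for s in scenes)


def dynamic_metadata(scenes):
    """One brightness value per scene (Dolby Vision-style)."""
    return {s.name: s.peak_nits for s in scenes}


print("static: ", static_metadata(scenes))
print("dynamic:", dynamic_metadata(scenes))
```

With static metadata, the TV is told only about the brightest moment in the whole title, so it has to treat the dim cave scene with the same settings as the rocket launch. With dynamic metadata, it can adjust for each scene.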

There’s also a competing technology, called HDR10+, that uses dynamic metadata much like Dolby Vision. It was developed by Samsung, Panasonic, and 20th Century Fox, and is available in some 4K TVs from Hisense, Roku, Samsung, TCL, Toshiba, and Vizio. It’s also supported in some Amazon Fire TV, Google TV, and Roku streaming players.

You can find TV shows and movies in HDR10+ on Amazon’s Prime Video streaming service, Apple TV+, Hulu, Paramount+, Google Play Movies, and YouTube. Disney+ says it’s planning to launch additional HDR10+ titles this year. HDR10+ can also be found on some 4K UHD Blu-ray discs, mainly from 20th Century Fox and Warner Bros. Netflix doesn’t support HDR10+ right now.

A growing number of TVs now support both of these dynamic HDR formats, though LG supports only Dolby Vision and Samsung supports only HDR10+.
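To see which brands appear on both lists, the brand names given above can be compared with a short script. This is a brand-level sketch only: support varies by model, so a brand showing up here doesn’t mean every set it makes handles that format.

```python
# Brands listed in this article as offering each format on some TVs.
dolby_vision = {"Amazon", "Hisense", "LG", "Roku", "Sharp", "Sony", "TCL", "Vizio"}
hdr10_plus = {"Hisense", "Roku", "Samsung", "TCL", "Toshiba", "Vizio"}

both = sorted(dolby_vision & hdr10_plus)
dolby_only = sorted(dolby_vision - hdr10_plus)
hdr10_plus_only = sorted(hdr10_plus - dolby_vision)

print("Both formats:     ", ", ".join(both))
print("Dolby Vision only:", ", ".join(dolby_only))
print("HDR10+ only:      ", ", ".join(hdr10_plus_only))
```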

Both Dolby Vision and HDR10+ have offshoots of their respective technologies—called Dolby Vision IQ and HDR10+ Adaptive—that can be found on some newer TVs. They use the built-in light sensors found in many TVs to dynamically adjust the brightness, contrast, and color of images based on the ambient brightness in your room.

One advantage of the dynamic metadata in both Dolby Vision and HDR10+ is that it can help a midlevel TV that doesn’t have the brightness levels of a top-tier model adapt the content to the set’s limitations. Using a process called “tone mapping,” the metadata can guide the TV to make scene-by-scene or frame-by-frame adjustments according to brightness, color, and contrast variations in the content.
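A toy example can show why tone mapping helps a dimmer set. The sketch below compares simply cutting off anything brighter than the TV can show with a basic “knee” curve that squeezes bright highlights into the headroom the set has left. The function names, the 0.75 knee, and the straight-line compression are assumptions chosen for illustration; real TVs use their own, more sophisticated curves.

```python
def hard_clip(scene_nits, display_peak):
    """Anything brighter than the display's peak is flattened to that peak."""
    return min(scene_nits, display_peak)


def tone_map(scene_nits, display_peak, content_peak, knee=0.75):
    """Compress highlights instead of clipping them (illustrative only).

    Below the knee, brightness passes through unchanged. Above it, the
    range up to `content_peak` is squeezed linearly into what is left
    between the knee and the display's peak.
    """
    if content_peak <= display_peak:
        return min(scene_nits, display_peak)  # nothing to compress
    knee_nits = knee * display_peak
    if scene_nits <= knee_nits:
        return scene_nits
    t = (scene_nits - knee_nits) / (content_peak - knee_nits)
    return min(knee_nits + t * (display_peak - knee_nits), display_peak)


# Hypothetical 400-nit TV showing content with highlights up to 1,000 nits.
for nits in (100, 600, 800, 1000):
    clipped = hard_clip(nits, 400)
    mapped = tone_map(nits, 400, 1000)
    print(f"{nits:>5} nits in content: clipped {clipped}, tone mapped {mapped:.0f}")
```

With clipping, the 600-, 800-, and 1,000-nit highlights all come out at 400 nits, which is the “single massive white flare” effect. With the knee curve, they stay in order from dimmer to brighter, so some detail in the highlights survives. Knowing the content’s peak for each scene, rather than once for the whole title, lets the TV pick a better-fitting curve each time.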

Many TVs now also support one more HDR format, called HLG, short for hybrid log gamma. It’s currently used by DirecTV and some pay TV operators, and it’s one of the formats that will be used in the new Next-Gen TV over-the-air TV broadcasts, technically called ATSC 3.0.

Many newer TVs have built-in support for HLG, and others can receive it via firmware updates if necessary.

Yes, that all sounds complicated.

But there’s some good news. First, your TV will automatically detect the type of HDR being used in a given movie or show and choose the right way to play it. (Often you’ll see a little flag on the TV screen showing the type of HDR that’s playing.) No fiddling required.

Second, as noted above, the type of HDR doesn’t seem to be too important right now. Based on what we’ve seen in our labs, a top-performing TV can do a great job with any of these HDR formats.

Our advice: Instead of fretting over the type of HDR, simply buy the best TV you can, especially because TV manufacturers can update their sets to support additional formats if they become more popular.