Can Technology Read Your Emotions?

It could help companies sense your moods to make cars smarter, ads more targeted, and customer service more empathetic. Or it may not work at all.

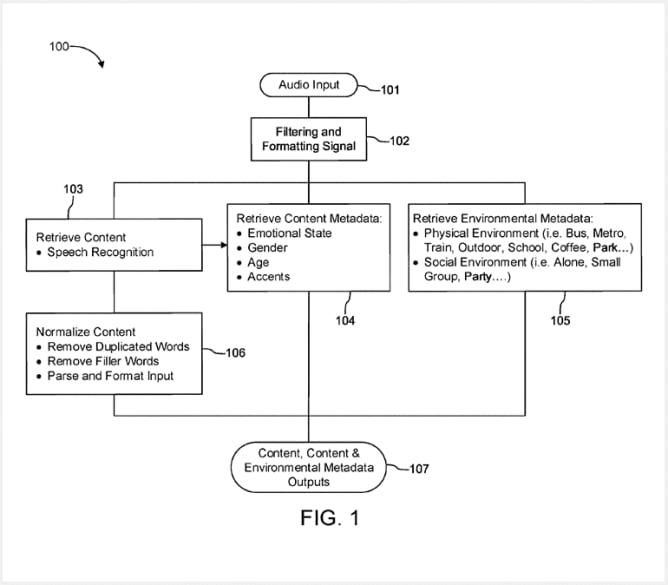

Earlier this year, Spotify received a U.S. patent for technology to read the emotions of people based on speech recognition and background sounds. That approval followed findings by Spotify researchers that the company could determine listeners’ personality traits based on the music they enjoy.

The possibility that a leading music service might target advertisements or recommend songs linked to a customer’s sentiments in real time itself prompted some strong emotions. More than 100 musicians, including Rage Against the Machine guitarist Tom Morello, and groups such as Amnesty International signed an open letter of protest to Spotify’s CEO, Daniel Ek.

“This recommendation technology is dangerous, a violation of privacy and other human rights, and should not be implemented by Spotify or any other company,” the petition reads. “Monitoring emotional state, and making recommendations based on it, puts the entity that deploys the tech in a dangerous position of power in relation to a user.”

Source: USPTO

Spotify publicly retreated, telling one of the protesting groups that it had no plans to use the speech recognition technology. Yet the disagreement highlighted the uneasiness many feel about companies using artificial intelligence to try to measure emotions.

In recent years companies like Spotify have become ever more sophisticated in developing profiles about us. Netflix sees that you tend to watch romantic comedies. Zappos keeps track of your love of expensive designer sneakers. Facebook knows a lot about us because we indicate our likes and dislikes on its platform.

But now data scientists in large companies and startups are using artificial intelligence to try to understand your emotions and state of mind in real time. Unless you pour out your heart over the phone or stand grinning broadly in front of a salesperson, businesses generally don’t know how you feel at the very moment you’re considering (or not considering) buying something. Until now. Maybe.

A wide array of companies want to know whether you feel joy, sorrow, fear, anger, surprise, gratitude, hate, or other sentiments. Facebook, Google, IBM, Panasonic, Sony, Honda, Samsung, Nielsen, and Xerox are among those that have filed related patent applications.

At its best, this type of technology could improve or save lives, for example, by detecting that someone is too distracted or upset to drive a car safely. Emotion sensing might detect severe depression and risk of suicide, or help people with autism understand social cues better.

At its worst, technology that reads our emotions as we experience them could create a dystopian world in which companies intrude into our innermost feelings and bombard us at every turn with advertising, complete with individualized pricing, that clamors for our attention.

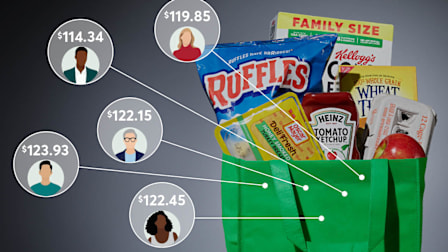

“Imagine you go to the supermarket, and it’s just like, price tags are digital. And they depend on who you are,” says Catalin Voss, a Stanford University PhD candidate in artificial intelligence who, as I wrote in 2014, co-founded a company in his teens to measure emotions through facial tracking. “That’s, that’s not the world that we want to live in.”

Setting ethical boundaries in such a brave new world promises to be complicated.

Rana el Kaliouby, co-founder and CEO of Affectiva, a leading emotion recognition company, cites the hypothetical example of her teenage daughter using her iPhone. “Say her phone can now detect that, you know, she looks like she’s depressed,” she says. “Should her phone text mom and say: ‘Hey, Jana looks depressed, you should do something about this.’ And should my daughter have a say?”

Despite these complex issues, Voss and el Kaliouby are firm believers that emotion technology will work and will benefit society. Yet other experts say we may never live in a world of computers that are acutely attuned to human feelings. Emotions vary too much across cultures and personal values, and even insights into a person’s state of mind may not be enough to predict a decision they might make.

The Seeds of a New Tracking Technology

Even if far from all-knowing, devices and algorithms with at least rudimentary abilities to sense human emotion and reactions are starting to emerge.

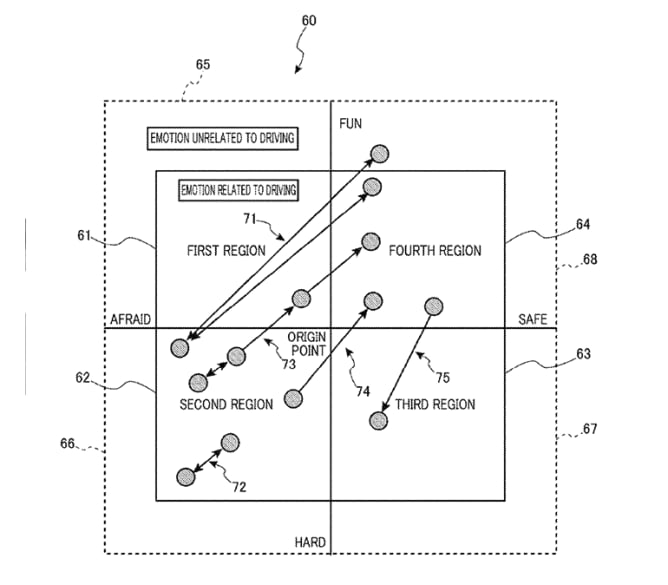

Some automakers hope to evolve systems that can currently detect physical states, such as fatigue, into ones that can more precisely measure a driver’s emotional state, such as being too angry or sad to drive. Today, some cars carry technologies such as Cadillac’s Super Cruise system, which can monitor driver attentiveness through eye tracking. Other recent models from Toyota and Volvo have sensors that are supposed to detect when drivers are becoming fatigued, so the car can warn them before they doze off.

But such sensors may one day be used for more empathic purposes. Carmakers such as Honda have shown off concept vehicles that would read and react to the emotional state of their drivers. In May the Swedish company Smart Eye, which builds automotive eye-tracking technology, bought Boston-based Affectiva for $73.5 million. Affectiva’s technology seeks to understand the state of the driver and passengers, including their emotions, to improve the ride, for example, by changing the lighting, music, or temperature. Keeping passengers happy could also boost five-star reviews for those who use their cars for ride-sharing services, the company says.

Source: USPTO

Outside the automotive world, companies such as Cogito, CallMiner, and Behavioral Signals help call centers recognize emotions and route calls to operators skilled at making sales to happy people or at calming upset customers.

When Amazon introduced its Halo fitness tracker wristband in 2020, the company’s medical officer wrote that the device measures how happy or sad you sound. Amazon advertises that feature today more narrowly as one that analyzes the tone of your voice.

Other industries where you may increasingly encounter emotional recognition technology are insurance (for fraud detection), advertising (to test what messages resonate best), and healthcare (to detect illness), according to a November report from the research and advisory firm Gartner.

Even businesses like casinos are working on technology to better understand a gambler’s state of mind while they’re playing. “I know of no technology that is capable of directly reading human emotions,” says John Acres, a Las Vegas inventor who helped fuel an expansion in player loyalty programs by putting tracking devices into slot machines in the early 1980s.

But “we can read data that allows us to deduce a customer’s emotional state with reasonable accuracy,” he says. This information includes how much someone wagers, how quickly, how much they win and lose, and their credit balance. Such information might help the casino determine when to send over free drinks or a cheery staffer, for example, before the player grows dissatisfied.
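The kind of deduction Acres describes can be pictured with a short Python sketch. It is only an illustration: the `PlaySession` fields mirror the signals he lists (wager size, speed, wins and losses, credit balance), but the class, the function names, and every weight and threshold are hypothetical and are not drawn from any real casino system.

```python
from dataclasses import dataclass


@dataclass
class PlaySession:
    """Hypothetical snapshot of one player's recent slot-machine activity."""
    average_wager: float     # dollars bet per spin
    spins_per_minute: float  # how quickly the player is betting
    net_result: float        # winnings minus losses so far (negative = losing)
    credit_balance: float    # dollars of credit left on the machine


def dissatisfaction_score(session: PlaySession) -> float:
    """Return a rough 0-to-1 guess at how unhappy the player may be.

    All weights and cutoffs below are made up for illustration.
    """
    score = 0.0
    if session.net_result < 0:
        # Express losses as a number of typical bets; cap this signal at 0.5.
        losses_in_bets = -session.net_result / max(session.average_wager, 0.01)
        score += min(losses_in_bets / 100, 0.5)
    if session.spins_per_minute > 12:
        # Unusually fast play is treated here as a sign of agitation.
        score += 0.25
    if session.credit_balance < 5 * session.average_wager:
        # The player is close to running out of credit.
        score += 0.25
    return min(score, 1.0)


def should_send_staffer(session: PlaySession, threshold: float = 0.6) -> bool:
    """Decide whether to send over a free drink or a cheery staffer."""
    return dissatisfaction_score(session) >= threshold
```

Nothing in this sketch reads an emotion directly. It only combines behavioral signals into a guess, which is the distinction Acres draws.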

How It Works

The basic idea behind emotion recognition technology isn’t terribly complicated.

Clues about your emotions come from facial and body expressions, the tone of your voice, the words you speak, and biometric data such as your heart rate. To get this information, the technology relies on microphones, cameras, sensors in various places (such as cars), and devices such as smartphones.

Sometimes the signal may be fairly obvious: A louder voice might indicate anger, distress, or excitement. For example, the Amazon Halo analyzes positivity through the user’s voice. Cars typically detect fatigue by studying driving patterns, although more elaborate systems of the future may also monitor a wider array of inputs, including clues from the driver’s eyes, facial expressions, and heart rate, as well as signals from the car itself.
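To make the "louder voice" signal concrete, here is a minimal Python sketch that measures loudness as root-mean-square (RMS) amplitude and compares it with a speaker's usual level. The function names, the `baseline_rms` input, and the 2x ratio are assumptions chosen for illustration; this is not how the Halo or any other product mentioned here is known to work.

```python
import math


def rms_level(samples):
    """Root-mean-square amplitude of audio samples scaled to [-1.0, 1.0]."""
    if not samples:
        return 0.0
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def is_raised_voice(samples, baseline_rms, ratio=2.0):
    """Flag a clip at least `ratio` times louder than the speaker's usual level.

    `baseline_rms` is assumed to come from earlier, calmer speech by the
    same person; the default ratio of 2.0 is an arbitrary illustration.
    """
    return rms_level(samples) >= ratio * baseline_rms


# Example: a clip five times louder than the baseline is flagged.
calm_clip = [0.1, -0.1, 0.1, -0.1]
loud_clip = [0.5, -0.5, 0.5, -0.5]
print(is_raised_voice(loud_clip, baseline_rms=rms_level(calm_clip)))
```

A check like this can say only that a voice is louder than usual. It cannot tell anger from distress or excitement, which is where the harder interpretation problem begins.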

If the basic concept is straightforward, accurately interpreting signals from diverse people and cultures poses huge programming challenges. Just consider how difficult it is to understand what someone else’s raised eyebrow or sly smile might actually mean.

Experts warn that systems might show bias because they’re not able to properly factor in racial, gender, and other differences. For example, the author of a 2018 research paper used Microsoft AI and Face++ emotion recognition technology to study the portraits of more than 400 NBA basketball players posted on the site basketball-reference.com. Microsoft registered the Black players as more contemptuous than the white players, and Face++, which has been developed by a China-based company, found the Black players generally angrier.

For all the passionate discussion about the potential of AI, some top experts doubt the technology will ever interpret emotions accurately. Obstacles include differences between people, cultures, and circumstances. Machines may just not be able to pick up on the subtleties.

“When you don’t have the context, it isn’t going to be possible to accurately place that emotion in a bucket,” says Rita Singh, an associate research professor at Carnegie Mellon University and author of the book “Profiling Humans from their Voice.” “Machines cannot fill in the gaps like humans can.”

She says that in most cases a salesperson will be more effective than a machine. She gives the example of a salesperson who learns what Singh does for a living. “The bottom line is, I am a professor, I have money, and I can spend it, right?” she says. “In many contexts, what you say is more important than how you say it. So the underlying emotion may not even be important.”

The potential for misinterpretation and abuse has prompted some experts to seek limits on emotion recognition, and the AI Now Institute at New York University calls for an outright ban.

Some industry leaders, such as el Kaliouby, who is the author of “Girl Decoded: A Scientist’s Quest to Reclaim Our Humanity by Bringing Emotional Intelligence to Technology,” advocate regulation to prevent abuses.

“There’s incredible opportunity to do good in the world, like with autism, with automotive, our cars being safer, with mental health applications,” she says. “But let’s not be naive, right? Let’s acknowledge that this could also be used to discriminate against people. And let’s make sure we push for thoughtful regulation, but also, as entrepreneurs and as business leaders, that we guard against these cases.”

Reading Your Needs

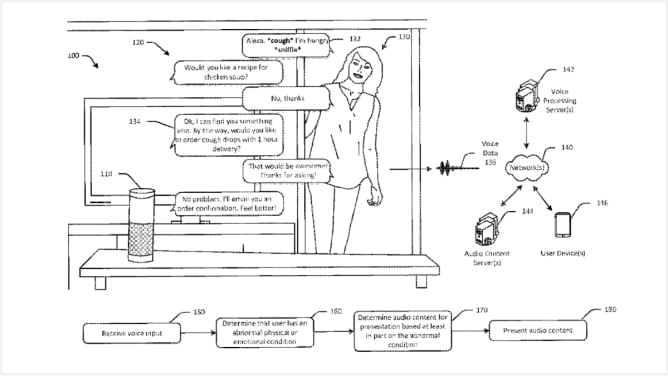

A patent application from Amazon depicts an emotionally sensitive future version of the company’s Alexa digital assistant, tuned into the coughs and sniffles of an owner who announces she is hungry.

“Would you like a recipe for chicken soup?” the device asks.

“No, thanks.”

“Ok, I can find you something else. By the way, would you like to order cough drops with 1 hour delivery?” comes the response, as outlined by two software engineers in a U.S. patent approved in 2018.

Source: USPTO

Phil Hilmes, Amazon Lab 126’s director of audio technology, who has worked on Alexa and other Amazon audio technology, didn’t respond to several requests for comment on emotion recognition technology. A spokeswoman, Megg Dunlap, says that patent filings don’t necessarily reflect current product development.

Amazon also told CR that there are benefits for customers when it comes to detecting tone of voice, pointing out that Alexa offers frustration detection in the U.S. to improve the customer experience. With frustration detection, Alexa will recognize positive, negative, or neutral characteristics for each utterance, which is different from recognizing distinct emotions such as happiness, sadness, fear, anger, or surprise, the company says.

A Spotify spokesman, Adam Grossberg, also says the streaming service doesn’t have any plans to develop the patented emotion recognition technology that prompted the protest petition from musicians and groups including Amnesty International, the Center for Digital Democracy, the Electronic Privacy Information Center, and Public Citizen.

“The research we do, patents we file, and any products Spotify develops both now and in the future will reflect our commitment to conducting business in a socially responsible manner and comply with applicable law,” he says.

Even though many patent applications never result in finished products, a March report by ResearchandMarkets.com forecast that emotion recognition technology would become a $37.1 billion market by 2026.

The Emotional Toddler

Experts agree that emotion recognition technology is now at a very early stage, with lots of work ahead. El Kaliouby likens the sophistication of the technology to that of a toddler, while Voss sees it as a bit more mature. “I would say that emotion recognition at this point is like at the level of a neurotypical 9-year-old,” says Voss, who sold his emotion recognition company to a Toyota subsidiary in 2015.

“Eventually, it’ll be at the level of a 16-year-old,” he says, “and then it will be at the level of a highly intelligent 25-year-old, and then maybe it will reach the level of a psychology graduate spending a lot of time sitting in front of people and trying to discern their emotional states and really understand what’s going on.”

Yet in the world of marketing, it may not matter if emotion recognition technology ever reaches even puberty. If it works well enough to boost sales or provide some product enhancement, companies will continue to invest, sometimes with out-of-this-world implications.

Engineers have already deployed an 11-pound robot, CIMON-2, on the International Space Station. It helps astronauts by taking photos and videos, navigating to various areas of the ship, and offering conversation and emotional support, according to IBM, which developed its artificial intelligence. But for now, according to Airbus Defence and Space, which developed and built the astronaut assistant, astronauts have switched it off, waiting to use it in future experiments.