Tesla Releases Latest Version of 'Full Self-Driving Capability,' but It Requires Owners to Pass a Safety Test

CR's experts say the seven-day driver evaluation underscores the system’s own limitations

Tesla owners who paid as much as $10,000 for the automaker’s “Full Self-Driving Capability” can now press a button on their car’s touch screen to request the newest beta version of the software, but Tesla will deploy it only to owners who demonstrate that they’ve been safe drivers for at least seven consecutive days. More eligible owners should expect access to the software starting next Friday, according to a tweet from Tesla CEO Elon Musk.

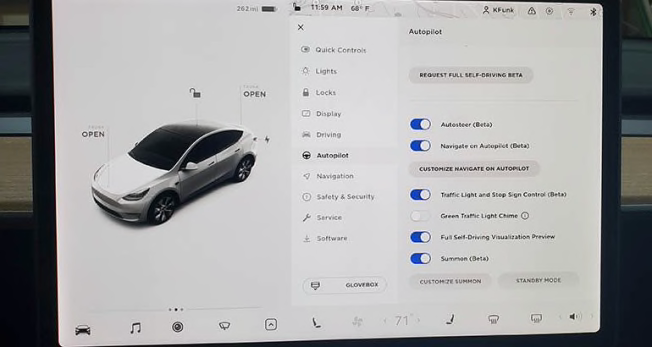

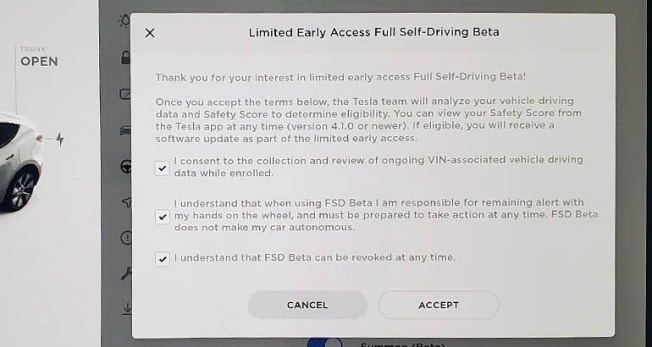

Last week, a button appeared on the vehicle’s touch screen that allows drivers to opt into receiving the beta software, although drivers will still have to meet the requirements of a new “safety score” in order to use it. The score is determined using a weighted mathematical formula involving five driving metrics, such as the frequency of hard braking and aggressive turning. The automaker can track these and other aspects of driving through data gathered by sensors on the car and sent back wirelessly to the company.

“These are combined to estimate the likelihood that your driving could result in a future collision,” the company says on the web page describing the score.

Apologies, 10.2 release will be a week from Friday

— Elon Musk (@elonmusk) September 28, 2021

Photo: Kelly Funkhouser/Consumer Reports

Consumer Reports’ Model Y received the update at 6 a.m. on Saturday, and we pushed the button to request the latest version. We began our seven consecutive days of “safe” driving on Saturday and have not yet seen any indication of our safety score in the Tesla smartphone app or on the car’s touch screen. Once we receive the upgrade (and presumably see our score), we’ll evaluate the new FSD version for consumers.

It’s unclear whether drivers accepted into the program after seven days of safe driving can later lose access to FSD 10.1 because of a low safety score.

Jake Fisher, senior director of CR’s Auto Test Center, says that requiring drivers to achieve an acceptable safety score only reinforces that FSD is not ready for public roads. He also says a better way to ensure safety would be for Tesla to use a driver monitoring system to make sure drivers are engaged whenever FSD or Autopilot is in use. Autopilot is a sister suite of driver-assistance features that can automate some steering, braking, and acceleration functions.

“Requiring an adequate safety score to use this developing system underlines the fact that it may not be safe to use,” he says. “Only allowing certain people who have already paid for this feature to experience it seems to be a clear admission of the risks of using the system.”

Photo: Kelly Funkhouser/Consumer Reports

As with previous updates, CR’s experts are concerned that Tesla is still using its existing owners and their vehicles to beta-test new features on public roads. This worries many automotive safety experts because other drivers, as well as cyclists and pedestrians, are unaware that they’re part of an ongoing experiment.

In 2020, Consumer Reports tested Autopilot and some FSD features and found that Autopilot lagged behind other similar systems in keeping drivers engaged with the driving task, and that FSD occasionally required a driver’s quick intervention to prevent a crash.

Tesla did not immediately respond to a request for comment on Saturday. The manufacturer hasn’t responded to any requests from our reporters since May 2019.

Musk has claimed over the years that FSD, together with Autopilot, would eventually make a Tesla fully self-driving and that the autonomous vehicles would be safer than human drivers. He also has said that Tesla owners could make money by hiring out their newly autonomous vehicles as robotaxis. Musk has missed self-imposed deadlines for delivering the promised autonomy.

Driver Monitoring Is Key

Fisher points out that the safety score criteria do not include a metric related to acceleration, an attribute that the high-performance Teslas are known for. He adds that data on braking and other factors can easily be misinterpreted, such as when a driver brakes moderately hard to avoid another driver running a stop sign. The score might flag that driver as unsafe when they’re actually making a good decision that prevents a collision. “I’d hate for a Tesla driver to be encouraged to accelerate to try to make a green light in an effort to avoid rapid deceleration that could cost them the chance at being approved for the update,” Fisher says. “Rather than focus on driving habits, Tesla should focus on the driver’s attention to the road.”

Fisher points out that many Tesla models already have driver-facing cameras that, with the right software, could determine factors such as mobile device usage and whether the driver is looking at the road when FSD or Autopilot is engaged. Such a system could also issue warnings, much as General Motors’ Super Cruise does when it is engaged. Super Cruise can automate driving tasks, such as steering, braking, and acceleration, on certain pre-mapped highways, and it allows drivers to take their hands off the wheel for extended periods of time.

Super Cruise monitors driver attention with a camera aimed at the driver, which checks that they’re watching the road whenever the system is switched on. If a driver’s attention wanes, lights on the steering wheel flash, and if the driver does not respond, the car will eventually shut down.

Autopilot uses a steering wheel touch system to determine whether a driver has their hands on the wheel, which does not necessarily mean they’re paying attention to the task of driving.

Editor’s Note: This article, originally published on September 25, 2021, has been updated with information from Tesla CEO Elon Musk regarding the availability of Full Self-Driving Capability.