The investigations are far from complete, but enough details are emerging about several high-profile crashes involving the advanced driver-assist systems of Tesla and other companies to draw important lessons about the limits of the current technology.

Driver-assist systems that can keep pace with the traffic flow and keep a car centered in its lane are becoming more commonplace, says Jake Fisher, director of auto testing at Consumer Reports.

But as drivers become more comfortable with these features, they might mistakenly believe the cars can operate independently. In reality, drivers always need to be alert behind the wheel, Fisher says.

"As these systems become more capable, they're actually becoming more dangerous," he says. "Once a driver and vehicle make several trips using the features without incident, it's human nature for them to stop watching the road and other cars as intently as they should."

Since January, two Tesla vehicles whose drivers were using the company's Autopilot system have struck the backs of stopped fire trucks, and another Tesla crashed into a roadside barrier, killing its driver. In March, an Uber self-driving test vehicle struck and killed a pedestrian in Arizona.

Although the limitations of these safety systems have detailed technical explanations, the recent crashes and deaths offer several important lessons for drivers who use and rely on driver-assist features such as automatic emergency braking (AEB) and adaptive cruise control (ACC):

Lessons Learned

- Drivers must pay attention: People using advanced driver-assist systems like Autopilot must always pay attention to the road. The preliminary National Transportation Safety Board report released this week about a fatal Model X crash in California in March shows that, because of the limitations of its programming, Autopilot can't be relied upon to stop, turn or accelerate when appropriate. Despite its name, Autopilot is only a suite of driver-assist features.

- It's not just Tesla: Cadillac, Infiniti, Mercedes-Benz, Nissan, and Volvo offer systems similar to Autopilot under various names. These systems, such as Volvo's Pilot Assist, can keep a vehicle moving with the flow of traffic and within its lane markings, and that can lull drivers into complacency. Autopilot isn't the only system with these limitations, and all of them should be used only with the driver's full attention on the road. Of these systems, only Cadillac's Super Cruise has a driver-facing camera that issues warnings if the driver stops looking at the road.

- Pedestrian detection still needs work: This important technology is still in its early stages. The Arizona crash in which a self-driving Uber test vehicle killed a woman pushing her bike across the road makes that clear. Uber's software reportedly classified the woman first as an object, then as a vehicle, and finally as a bicycle. Although the modified Volvo SUV's systems identified an object ahead, they did not alert the human test driver, and they did not stop the vehicle on their own.

- Automatic emergency braking has limits: Although AEB is effective in important ways, it can't save drivers in every situation. It typically won't keep a car from crashing at high speeds. Instead, it slows the vehicle and lessens the force of impact. That can still be a life-saving difference, but AEB is not a magic bullet for avoiding a collision. Crashes happen every day, both minor and serious, that show drivers can put too much faith in AEB.

- Sudden changes can put drivers at risk: Several Tesla crashes follow a common scenario. A Tesla operating on adaptive cruise control is following another vehicle. The lead vehicle suddenly leaves the lane to avoid something ahead that's stationary or moving slowly. The Tesla's driver-assist systems don't have time to react to the object now directly in the car's path, such as a stopped fire truck, and the car crashes into it.

- Adaptive cruise control will do what drivers ask of it: When a slower lead vehicle moves out of the lane, cars using this system often accelerate back toward the driver's preset speed. They do this even if there's an obstacle ahead, unless and until the system detects that obstacle. The NTSB reported this week that the Tesla Model X in the fatal California crash in March accelerated just before it hit a road barrier.

- There may be a test car on the road with you: The Uber crash in Arizona underscores how few standards exist for testing self-driving cars. States and the federal government currently leave it to the companies themselves to decide whether their technology is safe enough to test on public roads.
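The cut-out scenario in the list above can be sketched in a few lines. This is a deliberately simplified, hypothetical model and not Tesla's or any automaker's actual control logic. The function name `acc_target_speed`, the 75 mph preset speed, and the idea that the controller reacts to a single tracked object in its lane are all assumptions made for illustration. The point it shows is that the controller can only respond to what its sensors are currently tracking.

```python
from typing import Optional


def acc_target_speed(set_speed_mph: float, lead_speed_mph: Optional[float]) -> float:
    """Speed a simplified, hypothetical ACC controller would aim for.

    lead_speed_mph is the speed of whatever the sensors are tracking in the
    car's lane (0 for a stopped object), or None if nothing is tracked there.
    """
    if lead_speed_mph is None:
        # Nothing tracked ahead: resume the driver's preset speed.
        return set_speed_mph
    # Something is tracked ahead: don't aim to go faster than it.
    return min(set_speed_mph, lead_speed_mph)


# Following a slower car at 62 mph, with the preset speed assumed to be 75 mph.
print(acc_target_speed(75, 62))    # -> 62
# The lead car leaves the lane, and the stopped object ahead is NOT tracked.
print(acc_target_speed(75, None))  # -> 75  (the car speeds up)
# Only once the stopped object is tracked does the target speed drop.
print(acc_target_speed(75, 0))     # -> 0
```

In this sketch, the dangerous moment is the middle call. The lead car has gone, nothing new is being tracked, and the system "does what the driver asked" by speeding back up.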

The NTSB released details Thursday about a March 23 crash in Mountain View, Calif., involving a Tesla Model X whose driver was using Autopilot. It was previously reported that the driver, 38-year-old Walter Huang, used Autopilot continuously for 19 minutes before the crash. The NTSB confirmed what Tesla had previously asserted, that Huang didn't hold the steering wheel for the final six seconds before the crash. In the last minute before the crash, Huang held the steering wheel three separate times for 34 seconds total, the safety board said.

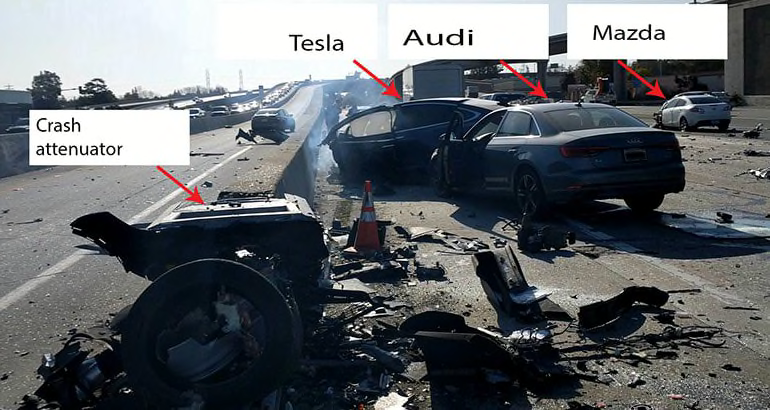

The NTSB's preliminary report says that the Model X was following a car that moved out of the Tesla's lane about four seconds before the crash. From three seconds before the crash until impact, the Model X sped up from 62 to 70.8 mph. A crash barrier, known as an attenuator, was in the way, but there was no pre-crash braking or evasive steering, according to the NTSB report.
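The reported speeds can be turned into a rough worked calculation. This is an illustration, not a figure from the NTSB report. It assumes the speed change was spread evenly over the final three seconds and uses the standard kinetic-energy relation E = ½mv², so for the same car the energy scales with the square of speed.

```latex
\begin{aligned}
\Delta v &= 70.8 - 62 = 8.8\ \text{mph} \approx 3.93\ \text{m/s} \\
\bar{a} &\approx \frac{3.93\ \text{m/s}}{3\ \text{s}} \approx 1.3\ \text{m/s}^2 \quad (\approx 0.13\,g) \\
\frac{E_{70.8}}{E_{62}} &= \left(\frac{70.8}{62}\right)^2 \approx 1.30
\end{aligned}
```

Under those assumptions, the car was carrying roughly 30 percent more kinetic energy into the barrier than it would have at its earlier 62 mph.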

Attenuators, specialized road barriers made of energy-absorbing materials, are designed to lessen the impact of fast-moving cars. But this attenuator was already partially crushed from a previous crash. Huang was found belted in his seat at the crash scene. Bystanders removed him before the Model X was engulfed in fire. He died later at a local hospital.

Tesla warns drivers, in its owner's manuals and with dashboard lights, that they must keep their hands on the steering wheel and pay attention to the road when Autopilot is engaged. Because Autopilot isn't a fully self-driving system, the driver remains responsible for taking over when needed, which can lead to tricky and dangerous hand-offs in emergencies. In the March crash, the system hadn't warned Huang to take the wheel during the final 15 minutes before the crash, the NTSB said.

Limits of Technology

Many automakers working to create effective self-driving car technology are banking on the redundancy of multiple types of sensors—cameras, radar and laser-based lidar—to weed out false positives (indications of obstacles that aren't there) and arrive at correct decisions as these cars move about on public roads.
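The redundancy idea can be illustrated with a simple vote across sensor types. This sketch stands in for real sensor fusion, which typically combines probabilistic estimates rather than yes/no flags. The function `confirmed_obstacle` and its two-vote threshold are hypothetical, chosen only to show how agreement between sensors can filter out a false positive that one sensor reports alone.

```python
def confirmed_obstacle(camera: bool, radar: bool, lidar: bool, min_votes: int = 2) -> bool:
    """Treat an obstacle as real only if at least `min_votes` sensors report it.

    A hypothetical k-out-of-n vote; it is not any automaker's actual logic.
    """
    votes = sum([camera, radar, lidar])  # each True counts as one vote
    return votes >= min_votes


# A lone camera detection is filtered out as a likely false positive.
print(confirmed_obstacle(camera=True, radar=False, lidar=False))  # -> False
# Radar and lidar agree, so the obstacle is accepted even if the camera misses it.
print(confirmed_obstacle(camera=False, radar=True, lidar=True))   # -> True
```

The same sketch shows the trade-off raised by a camera-and-radar-only design. With just two sensor types, a vote like this must either require both to agree, so an object that one sensor misses is dropped, or trust either sensor alone, which lets more false positives through.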

Tesla is among a minority of companies betting that cameras and radar will be enough. Tesla CEO Elon Musk has been outspoken about this, arguing that lidar (a laser-based sensing system that can create detailed maps of roads) is expensive and that its effectiveness is overrated. That's one reason he says Tesla vehicles sold today already have the hardware needed for future full autonomy, and that the main remaining obstacles are perfecting the software and winning regulatory approval.

The crashes involving Tesla vehicles striking stationary objects—the crash attenuator in California, a stopped fire truck in Utah in May, and another fire truck in California in January—show the limitations of relying on just cameras and radar, says Raj Rajkumar, director of the Connected and Autonomous Driving Collaborative Research Lab at Carnegie Mellon University in Pittsburgh. Camera-based systems have to be trained to recognize specific images, and if they encounter something in the real world that doesn't match their expectations, the radar has to pick it up, Rajkumar said.

Tesla's system missed the fire trucks, and in a reported incident in China, a Tesla crashed into a stopped garbage truck. The company's technology appears to work well with moving objects but not with stationary ones, Rajkumar said.

"Consumers need to be extremely cautious about the claims being made," Rajkumar said. "There's a lot of hype."

Building Boundaries

Last year, the NTSB completed its investigation of the fatal 2016 collision between a Tesla Model S and a semi-trailer blocking a Florida highway. The safety board found that Autopilot worked as intended, in the sense that the system wasn't designed to detect a truck crossing the car's path. However, the board faulted Tesla for allowing its technology to be used on roads that could predictably present such hazardous situations.

General Motors has taken a different approach with its Super Cruise driver-assist system. Super Cruise works only on the 130,000 miles of limited-access highways in the U.S. and Canada for which the company has generated lidar-based, high-definition maps.

Tesla has never provided detailed data to the public demonstrating the conditions under which Autopilot can safely operate, says David Friedman, director of cars and product policy and analysis for Consumers Union, the advocacy division of Consumer Reports.

"Tesla's driver-assist system is allowed to operate under situations where it cannot reliably sense, verify and react to its surroundings in a safe manner," Friedman said. "Tesla hasn't made sufficient changes to its system to address the NTSB's concerns."

Company Responses

After the March self-driving crash in Arizona, Uber suspended its testing operations. The company says it has pulled its test vehicles out of Arizona for good but intends to resume testing in California and Pennsylvania. It has also hired a former chairman of the NTSB, Christopher Hart, as a safety adviser.

Tesla has said that the limitations of Autopilot, adaptive cruise control, and automatic emergency braking are laid out in its owner's manuals. According to the company, adaptive cruise control may not brake or slow down for stationary vehicles, especially when traveling over 50 mph. AEB is designed to reduce the severity of an impact, not to avoid a collision, the company says, and Autosteer won't steer a vehicle around objects that jut into the driving lane.

In response to questions about the NTSB preliminary report about the California crash, a Tesla spokeswoman referred Consumer Reports back to the company's statement posted on March 30.

"The safety of our customers is our top priority, which is why we are working closely with investigators to understand what happened, and what we can do to prevent this from happening in the future," the statement reads. "Tesla Autopilot does not prevent all accidents—such a standard would be impossible—but it makes them much less likely to occur. It unequivocally makes the world safer for the vehicle occupants, pedestrians and cyclists."

Clarification: A previous version of the headline for this article suggested that Tesla has self-driving technology in its vehicles. Tesla's Autopilot system is currently just a suite of driver-assistance technologies.